I've worked with folks who didn't like reducing performance or scalability to a few pass/fail test cases. They preferred the data gathering and engineering aspects of a more exploratory approach. I don't want to label exploration as wrong, but I want to distinguish open-ended exploration from a test case with a defined finish line.

Exploration is why poking around in a kayak or stand-up paddleboard is fun. You can find lots of cool, secluded places, and do some serious birding. But you don't sail a 42' sailboat that's your home into anything without a clear, deep, wide, and marked channel. These criteria are essential, and often established by government agencies like the US Coast Guard. Test cases include "Is the channel on a published nautical chart?" and "Are the marks in good repair?" and "Is the charted depth adequate?" and "Is there anything in the way?" If any of these test cases fails, we have to turn the boat around and head for deep, safe water.

For a big sailboat, the question isn't "How fast?" It's more like "How far?" It's a different performance question, related to destinations more than speed. As with deploying application software, we have to be sure we're asking the right questions. When we shift our focus a bit, it's clear that "speed" is one aspect of the more inclusive concept of scale. We have to consider workload, transaction mix, network overheads and latencies, and the ordinary chaos of a web-based architecture. When getting started on a new project, it's hard to be sure everything is accounted for.

Additionally, we should consider asking about simplicity and maintainability. When looking at Python, we have to be cognizant of Python as a package deal: it starts with a low barrier to entry, navigates through an easy-to-use language, and folds in a rich library of add-on packages. Narrowing the conversation to only talking about performance can miss the additional benefits of a language like Python.

In this post I want to outline a five-step process for managing performance testing. I think it’s helpful for Python applications, where performance is often brought up, but it applies widely. We’ll start the process with a definition of some use cases, then formalize them in Gherkin, review them with stakeholders, implement them in Python, and finally, make the whole thing an ongoing part of our deployment process. Why use the Gherkin language? I think the language for describing a test applies to many test varieties, including integration, system, acceptance, and performance testing.

I’d like to start by looking at the goals of performance testing, and why it's sometimes hard to ask appropriate questions. From there, we’ll be able to move into a method for measuring Python application performance.

We often start our Python performance journey from a safe harbor. We have built an application and now we want to be sure it will perform well at scale. This should lead to the pretty obvious question of "What scale are we talking about?" It seems to be more important than simply trying to measure speed. This leads to several approaches to scalability testing.

Run the application or service or platform at increasing workloads until performance is "bad." I'm going to call this exploration.

Set levels for a workload and targets for performance to see if the application meets the performance requirement under the given workload. I want to call this testing to distinguish it from the less constrained exploration.
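The two approaches to scalability testing described above, exploration and testing, can be sketched in a few lines of Python. This is only a minimal illustration under loudly stated assumptions: `handle_request` is a hypothetical stand-in for the application, "workload" is reduced to a single payload size, and the 95th-percentile latency thresholds are invented numbers rather than recommendations.

```python
import statistics
import time
from typing import Callable


def handle_request(payload: int) -> int:
    """Hypothetical stand-in for the application under test."""
    return sum(i * i for i in range(payload))


def measure_latencies(operation: Callable, requests: int, payload) -> list[float]:
    """Call the operation `requests` times; return per-call latencies in seconds."""
    latencies = []
    for _ in range(requests):
        start = time.perf_counter()
        operation(payload)
        latencies.append(time.perf_counter() - start)
    return latencies


def p95(latencies: list[float]) -> float:
    """Estimate the 95th percentile (the last of 19 cut points for n=20)."""
    return statistics.quantiles(latencies, n=20)[-1]


def meets_target(operation: Callable, requests: int, payload, target_seconds: float) -> bool:
    """Testing: a fixed workload and a fixed target give a pass/fail answer."""
    return p95(measure_latencies(operation, requests, payload)) <= target_seconds


def explore(operation: Callable, requests: int, payloads, bad_seconds: float) -> list[tuple]:
    """Exploration: step through workloads, stopping once performance is 'bad'."""
    observations = []
    for payload in payloads:
        observed = p95(measure_latencies(operation, requests, payload))
        observations.append((payload, observed))
        if observed > bad_seconds:
            break
    return observations


if __name__ == "__main__":
    # Roughly: Given 50 requests with a payload of 1_000,
    # Then the p95 latency is at most 50 ms (hypothetical numbers).
    print("meets target:", meets_target(handle_request, 50, 1_000, target_seconds=0.05))
    for payload, observed in explore(handle_request, 50, [1_000, 10_000, 100_000], bad_seconds=0.005):
        print(f"payload={payload:>7} p95={observed:.6f}s")
```

The testing function has a defined finish line, so it returns a single boolean. The exploration function returns the whole series of observations, because the interesting result is where performance starts to degrade.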
What’s perhaps more central here is that any question about "speed" is not necessarily the right question to ask. The use cases for a big, live-aboard sailboat are focused on where it can go, not how fast it gets there. And even the "Where can it go?" question turns out to be more complex than it seems at first. Failing to ask the right questions can leave you high and dry.

There are hard lessons to be learned when talking about the performance of sailboats. I want to suggest that Python’s performance, like a boat's, is intimately tied to configuration choices - i.e., sails - and specific use cases - i.e., wind direction. Further, I want to suggest that questions about volume of data and scalability are also important, and sometimes overlooked.

When we add libraries like numpy and Dask into the mix, what are we really measuring? What should we be measuring?

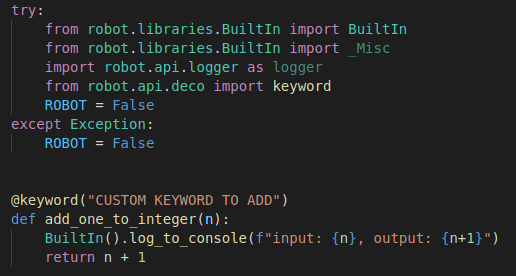

Python test framework software

A question that comes up is "How fast does it go?" It's a pleasant enough question with a tricky, evasive-sounding answer. It's a sailboat, meaning the speed varies - to an extent - with the wind. It also varies with the sails being used, weather and sea state, and the relative angle between boat and wind. So answering "it depends" always felt awkward. It's not that I wanted to be evasive, but measuring performance is nuanced. There's no simple "it goes this fast" for boats. Nor is there one when writing software in Python.